15 More ML Gems for Ruby

I’m happy to announce another round of machine learning gems for Ruby. As in the last round, many use FFI or Rice to interface with high-performance C and C++ code. Let’s dive in.

Data Frames

Rover is a data frame library. Data frames are a popular data structure for data analysis and machine learning. Rover is powered by Numo and uses columnar storage for fast operations on columns.
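To show why columnar storage helps, here is a minimal plain-Ruby sketch. `TinyFrame` is a hypothetical class made up for illustration; it is not Rover's API and uses Ruby arrays rather than Numo. The idea is only that each column lives in its own array, so a column-wise operation never touches the other columns.

```ruby
# Column-oriented storage: one array per column, keyed by column name.
# TinyFrame is a hypothetical name for illustration, not Rover's API.
class TinyFrame
  def initialize(columns)
    lengths = columns.values.map(&:size).uniq
    raise ArgumentError, "columns must have equal length" if lengths.size > 1

    @columns = columns
  end

  # Reading a column is a single hash lookup; no rows are visited.
  def [](name)
    @columns.fetch(name)
  end

  # A column-wise aggregate only scans that column's array.
  def mean(name)
    col = self[name]
    col.sum.to_f / col.size
  end

  # Rows are assembled on demand, the slower direction for this layout.
  def row(index)
    @columns.transform_values { |col| col[index] }
  end
end

df = TinyFrame.new({ price: [2.5, 10.0, 12.5], sale: [false, true, false] })
average_price = df.mean(:price)
first_row = df.row(0) # => {:price=>2.5, :sale=>false}
```

The trade-off is visible in `row`: rebuilding a single record has to visit every column, which is why this layout suits analysis workloads more than record-at-a-time access.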

Forecasting

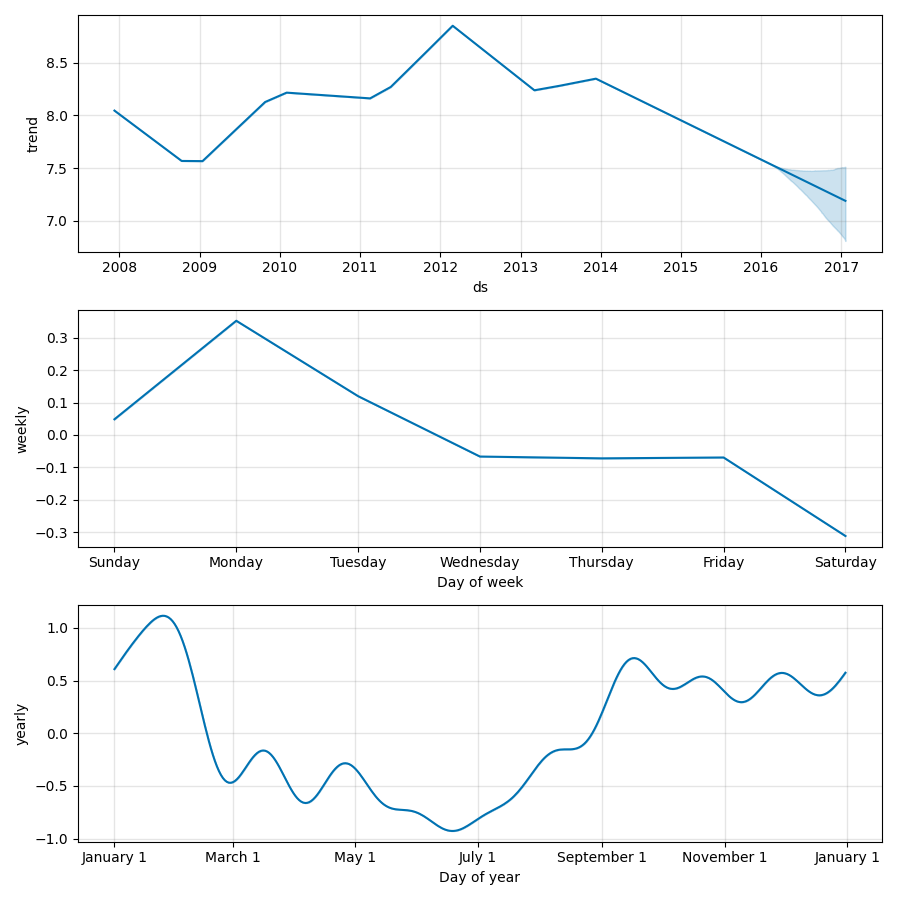

Prophet.rb is a forecasting library. It supports multiple seasonalities, holidays, growth caps, and many other features. It makes it really easy to visualize the components of a forecast.

Under the hood, Prophet uses CmdStan.rb to perform optimization and Markov chain Monte Carlo (MCMC) sampling.

Anomaly Detection

OutlierTree produces human-readable explanations for why values are detected as outliers.

Price (2.50) looks low given Department is Books and Sale is false

IsoTree uses Isolation Forest, which creates trees to split the data. Data points that become isolated in fewer splits on average are more likely to be anomalies.
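The isolation idea can be sketched in a few lines of plain Ruby. This is a simplified one-dimensional illustration, not IsoTree's implementation: the function names, the depth cap, and the sample prices are assumptions for the example, and a real isolation forest also subsamples the data and works across many features.

```ruby
MAX_DEPTH = 64 # safety cap for this sketch

# Count how many random splits it takes to isolate `target` within `values`.
# `target` is assumed to be one of the values.
def isolation_depth(values, target, rng, depth = 0)
  min, max = values.minmax
  return depth if values.size <= 1 || min == max || depth >= MAX_DEPTH

  split = min + rng.rand * (max - min)
  # Keep only the side of the split that contains the target.
  same_side =
    if target < split
      values.select { |v| v < split }
    else
      values.select { |v| v >= split }
    end
  isolation_depth(same_side, target, rng, depth + 1)
end

# Average the depth over many random trees; lower means easier to isolate.
def average_depth(values, target, trees: 200, seed: 42)
  rng = Random.new(seed)
  Array.new(trees) { isolation_depth(values, target, rng) }.sum.to_f / trees
end

prices = [9.5, 10.0, 10.2, 10.4, 10.5, 10.9, 11.0, 11.3, 2.5]
outlier_depth = average_depth(prices, 2.5)
typical_depth = average_depth(prices, 10.4)
```

Because 2.5 sits far from the other prices, most random splits land in the gap and separate it right away, so its average depth comes out well below that of a value in the middle of the cluster.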

MIDAS performs edge stream anomaly detection. It can find anomalies in streaming data that can be modeled as a graph, like network traffic between servers.
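Here is a rough sketch of the scoring idea, based on my reading of the MIDAS paper rather than on the gem's code. It compares how often an edge appears in the current time tick with its average rate so far, using a chi-squared style statistic. `EdgeStreamScorer` is a hypothetical class, and it keeps exact counts in a Hash where MIDAS uses count-min sketches to bound memory.

```ruby
# Simplified edge-stream scorer. Exact Hash counts stand in for the
# count-min sketches that MIDAS uses, so memory is unbounded here.
class EdgeStreamScorer
  def initialize
    @total = Hash.new(0)   # edge => count over all ticks so far
    @current = Hash.new(0) # edge => count within the current tick
    @tick = nil
  end

  # `tick` is assumed to be a positive integer that never decreases.
  def score(source, dest, tick)
    if tick != @tick
      @current.clear
      @tick = tick
    end

    edge = [source, dest]
    @current[edge] += 1
    @total[edge] += 1
    return 0.0 if tick <= 1 # no history to compare against yet

    a = @current[edge].to_f
    s = @total[edge].to_f
    # Squared gap between the current count and the mean rate s / tick,
    # scaled as in a chi-squared test.
    (a - s / tick)**2 * tick**2 / (s * (tick - 1))
  end
end

scorer = EdgeStreamScorer.new
# One "web" -> "db" edge per tick is steady traffic.
steady_scores = (1..5).map { |tick| scorer.score("web", "db", tick) }
# Twenty edges in a single tick is a burst.
burst_score = Array.new(20) { scorer.score("web", "db", 6) }.last
```

Steady traffic scores zero because the current count matches the historical rate, while the burst produces a large score.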

Natural Language Processing

MITIE does named-entity recognition. It finds people, organizations, and locations in text.

Nat works at GitHub in San Francisco.

Bling Fire performs very fast text tokenization with pre-trained models, like BERT and XLNet.

"hello world!" => ["hello", "world", "!"]

YouTokenToMe lets you train your own text tokenization model. It uses Byte Pair Encoding (BPE) for subword tokenization.

"fast tokenization!" => ["▁fast", "▁token", "ization", "!"]
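To give a feel for how BPE training works, here is a minimal plain-Ruby sketch. It is not YouTokenToMe's API or implementation (the method names and the toy corpus are made up for illustration), and it skips details like word frequencies and efficient pair counting. It repeatedly merges the most frequent adjacent pair of symbols, then tokenizes by replaying the merges in order.

```ruby
# Replace each adjacent occurrence of `pair` with one joined symbol.
def merge_pair(symbols, pair)
  out = []
  i = 0
  while i < symbols.size
    if symbols[i] == pair[0] && symbols[i + 1] == pair[1]
      out << pair.join
      i += 2
    else
      out << symbols[i]
      i += 1
    end
  end
  out
end

# Learn up to `num_merges` merge rules from a list of words.
def learn_bpe(words, num_merges)
  # Start from single characters, with "▁" marking the start of a word.
  corpus = words.map { |word| ["▁"] + word.chars }
  merges = []

  num_merges.times do
    pair_counts = Hash.new(0)
    corpus.each do |symbols|
      symbols.each_cons(2) { |pair| pair_counts[pair] += 1 }
    end
    break if pair_counts.empty?

    best, _count = pair_counts.max_by { |_pair, count| count }
    merges << best
    corpus = corpus.map { |symbols| merge_pair(symbols, best) }
  end

  merges
end

# Tokenize a word by replaying the learned merges in order.
def bpe_tokenize(word, merges)
  merges.reduce(["▁"] + word.chars) { |symbols, pair| merge_pair(symbols, pair) }
end

merges = learn_bpe(%w[fast faster fastest], 4)
bpe_tokenize("fastest", merges) # => ["▁fast", "e", "st"]
```

The common prefix becomes a single token after four merges, while the rarer suffixes stay split into smaller pieces.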

Optimization

OR-Tools is an optimization library. It can be used for a wide range of tasks, like scheduling, vehicle routing, and wedding seating charts. There are higher level interfaces for a few tasks, or you can define your own problem from scratch.

Deep Learning

There are now three new deep learning libraries built on Torch.rb:

- TorchVision for computer vision tasks

- TorchText for text and NLP tasks

- TorchAudio for audio tasks

There are also new examples of generative adversarial networks.

Dimensionality Reduction

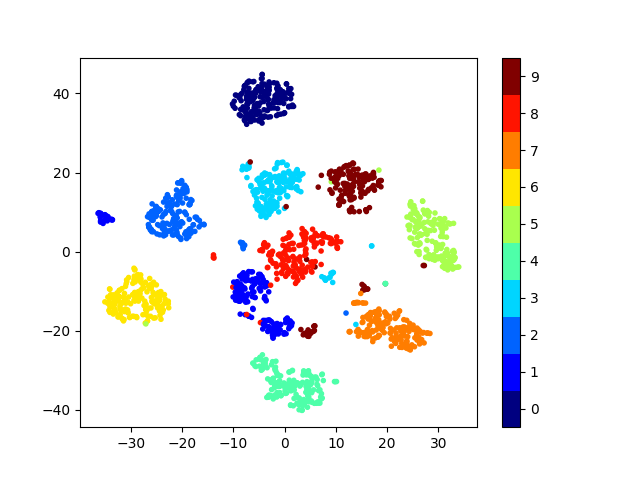

t-SNE lets you visualize high-dimensional data in a low-dimensional space. Like other dimensionality reduction algorithms, it tries to preserve the structure of the data. The gem uses Multicore-TSNE, which is a performant implementation.

Here’s what the output looks like on the Optical Recognition of Handwritten Digits data set. The t-SNE algorithm has no knowledge of the labels (colors on the plot), but keeps points with the same label together remarkably well.

Similarity Search

Faiss supports approximate nearest neighbors, k-means clustering, and another dimensionality reduction technique called principal component analysis (PCA).
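For context, this is the exact, brute-force version of nearest neighbor search in plain Ruby. It is only a baseline sketch with made-up method names and data, not the Faiss API. Approximate methods exist because this scan compares the query against every stored vector, which gets slow as the collection grows.

```ruby
# Squared Euclidean distance between two equal-length vectors.
def squared_distance(a, b)
  a.zip(b).sum { |x, y| (x - y)**2 }
end

# Exact k nearest neighbors by scanning every vector.
# Returns the indexes of the k closest vectors, nearest first.
def nearest_neighbors(vectors, query, k)
  vectors.each_with_index
         .min_by(k) { |vector, _index| squared_distance(vector, query) }
         .map { |_vector, index| index }
end

vectors = [[0.0, 0.0], [1.0, 1.0], [5.0, 5.0], [0.9, 1.2]]
nearest_neighbors(vectors, [1.0, 1.0], 2) # => [1, 3]
```

Each query costs time proportional to the number of vectors times their dimension. Approximate indexes trade a little accuracy to avoid that full scan.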

Non-Gems

Jupyter notebooks are a nice environment for machine learning.

ml-stack provides two Docker images to run IRuby notebooks. The standard one has Rumale, XGBoost, fastText, and a number of other gems. The deep learning one has Torch.rb and the new deep learning libraries mentioned earlier. It’s designed for GPUs, which can significantly speed up the training of deep learning models. You can run the images on your local machine, Binder, or Paperspace.

Closing Thoughts

Ruby is getting better for machine learning. Many popular Python libraries now have Ruby counterparts. They can be used in Ruby scripts, IRuby notebooks, and Rails applications. This should make it easier to do machine learning without leaving your favorite language.